Setting your Gas Limit on Monad

On Monad, transaction fees are charged based on the transaction's declared gas_limit, rather than on the actual gas_used post execution. Fees are therefore determined by the upfront specified gas, regardless of how much gas is ultimately consumed. This is one of several changes to Monad's transaction fee mechanism relative to Ethereum's design; the base fee update rule and its implications were discussed previously. In this blog post, we analyze how charging on gas_limit affects gas limit selection in practice and provide guidance on setting tighter limits without increasing failure rates.

Monad uses delayed or asynchronous execution to substantially increase execution throughput. In this context, charging fees on the gas_limit mitigates denial-of-service attacks and discourages certain types of economically inefficient transactions (such as extraction of optimistic MEV). Further details are given in the Monad specification document.

While setting the gas_limit too high on Ethereum is largely harmless, on Monad, setting the limit too high results in higher fees and unnecessary deadweight loss on the chain. On both Ethereum and Monad, setting gas_limit too low risks execution failure.

In the remainder of this post, we examine how users and applications set gas limits on Monad in practice, comparing this behavior to Ethereum and Base (a high-throughput Layer-2), and explore strategies for tightening gas limits without meaningfully increasing failure rates.

Gas Charged on Monad

On Ethereum and other EVM chains such as Base, users face little downside from setting overly generous gas_limit values. The transaction fee is charged on the actual gas_used, so overestimating the limit has no direct cost. As a result, it is common to see transactions with unrealistic gas limits. For example, we observe transactions on Ethereum that declare a gas_limit of 5,000,000 for a simple transfer that ultimately consumes only 21,000 gas—an overestimation by more than a factor of 200. While unnecessary, this behavior is effectively harmless for the transaction submitter on Ethereum.

On Monad, however, the situation is different. Execution is delayed, so the gas actually used by a transaction cannot be known at the time of its scheduling. Transactions are therefore currently scheduled based on their self-declared gas_limit, which also determines how much of the block limit they reserve.

- Since fees are charged on gas_limit, overestimating this quantity leads to overpaying relative to the minimum amount that would have been needed. Setting the gas limit excessively high also means reserving block capacity that is never actually used, creating deadweight that drags on the chain. Under congestion, this deadweight reduces the effective throughput by crowding out other transactions, even when the block’s actual execution load could be relatively small.

- At the same time, setting the gas limit too low is also undesirable. If a transaction’s execution exceeds its declared gas_limit, it fails, and the sender is still on the hook for the gas consumed up to that point (on Monad, this is the gas_limit). The transaction must then be resubmitted with a higher limit, incurring additional fees and delay.

As a result, there is a meaningful trade-off to be made with the gas limit selection on Monad. Users, wallets, and applications must balance the risk of underestimating gas against the cost of overpaying.
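To make the cost of an overly generous limit concrete, the sketch below compares the two charging rules using the simple-transfer figures quoted earlier (21,000 gas used, 5,000,000 declared). The function names and the flat `GAS_PRICE` are illustrative assumptions; real fees also involve base-fee and priority-fee components that are ignored here.

```python
def fee_on_gas_used(gas_used: int, gas_price: int) -> int:
    """Fee when charging on consumption (Ethereum-style, simplified)."""
    return gas_used * gas_price


def fee_on_gas_limit(gas_limit: int, gas_price: int) -> int:
    """Fee when charging on the declared limit (Monad-style, simplified)."""
    return gas_limit * gas_price


def overpayment_factor(gas_used: int, gas_limit: int) -> float:
    """How many times more is paid on the limit than on actual consumption."""
    if gas_used <= 0 or gas_limit < gas_used:
        raise ValueError("expected 0 < gas_used <= gas_limit")
    return gas_limit / gas_used


# Figures from the simple-transfer example: 21,000 gas used, 5,000,000 declared.
GAS_PRICE = 1  # hypothetical price per unit of gas, arbitrary units
paid_on_used = fee_on_gas_used(21_000, GAS_PRICE)
paid_on_limit = fee_on_gas_limit(5_000_000, GAS_PRICE)
factor = overpayment_factor(21_000, 5_000_000)  # roughly 238
```

Under charging on gas_used the loose limit costs nothing extra, while under charging on gas_limit the same transaction pays for every declared unit.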

Gas Used and Limit on Monad vs Ethereum vs Base

Monad has been live for more than two months—long enough for users and applications to begin adapting to the new system design around gas limits. This provides us with data to compare transaction behavior across chains. In particular, we compare how transactions set their gas limits on Ethereum, Base, and Monad.

In the table below, we show summary statistics for Ethereum, Base, and Monad covering the period from January 12 through January 18, 2026. We make two significant observations. First, Ethereum L1 has a lower transaction failure rate (0.9%) than both Base (9.9%) and Monad (6.0%). This difference may be partly explained by the fact that transactions are cheaper on Base and Monad than on Ethereum L1, which could lead to the submission of more speculative transactions. But it is also important to note that most Ethereum blocks are produced by builders, many of whom offer revert protection, which can further reduce observed on-chain failure rates.

Importantly, only 0.58% of transactions in our Monad sample fail due to running out of gas; the remaining failures are due to other execution errors. This indicates that users do not frequently underestimate the gas required for their transactions, despite fees being charged on the declared gas_limit.

Next, how many users are overpaying? We examine unused gas, defined as 1 - gas_used / gas_limit.¹ This measures the fraction of the declared gas limit that goes unused. On Monad, where fees are charged on the declared limit, unused gas translates directly into overpayment. On Ethereum and Base, it instead captures how loosely gas limits are set relative to actual consumption.

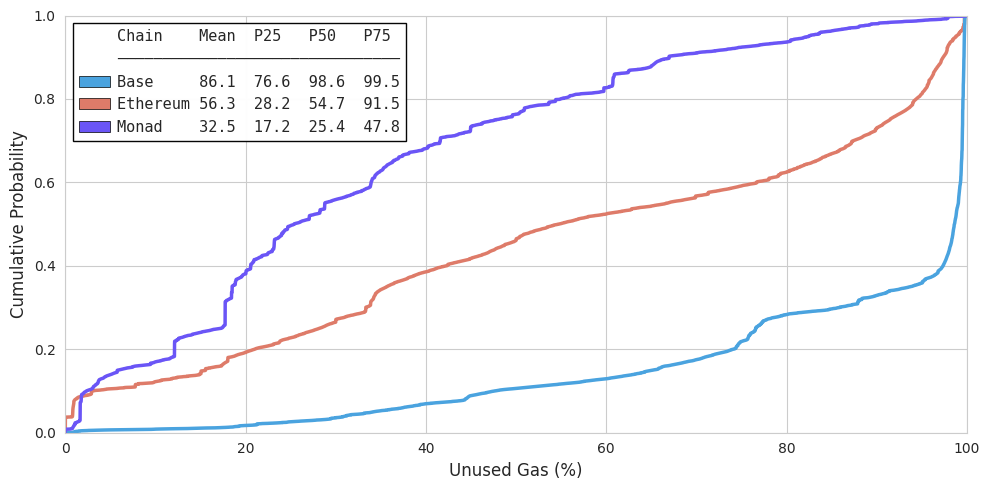

The figure below shows the gas-weighted cumulative distribution of unused gas across Ethereum, Base, and Monad. For each transaction, unused gas is defined as:

$$\texttt{unused_gas} = 1 - \frac{\texttt{gas_used}}{\texttt{gas_limit}}$$

A value of 0% means the transaction used exactly its declared limit; higher values indicate more excess capacity reserved. The CDF is weighted by each transaction's gas_limit, so that the cumulative probability at unused gas level $x$ represents the fraction of total declared gas with unused gas at or below $x$:

$$F(x) = \frac{\sum_i \text{gas_limit}_i \cdot \mathbf{1}[\text{unused_gas}_i \leq x]}{\sum_i \text{gas_limit}_i}$$
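The two formulas above can be computed directly from per-transaction data. The following is a minimal sketch, assuming transactions are available as hypothetical `(gas_used, gas_limit)` pairs; the sample values are made up for illustration and are not taken from the dataset.

```python
from typing import Sequence, Tuple


def unused_gas(gas_used: int, gas_limit: int) -> float:
    """Fraction of the declared limit that goes unused."""
    return 1.0 - gas_used / gas_limit


def gas_weighted_cdf(txs: Sequence[Tuple[int, int]], x: float) -> float:
    """F(x): share of total declared gas whose unused gas is at or below x.

    txs holds (gas_used, gas_limit) pairs; each pair is weighted by its limit.
    """
    total_declared = sum(limit for _, limit in txs)
    covered = sum(limit for used, limit in txs if unused_gas(used, limit) <= x)
    return covered / total_declared


# Illustrative sample: a tight transfer, a half-used call, a very loose limit.
sample = [(21_000, 21_000), (50_000, 100_000), (10_000, 1_000_000)]
share_at_half = gas_weighted_cdf(sample, 0.5)
```

Because the weights are the declared limits, a single transaction with a very loose limit dominates the distribution, which is why the gas-weighted view differs from a simple per-transaction count.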

Overall, unused gas is lowest on Monad across most of the distribution, indicating that transactions on Monad reserve substantially less excess gas than on Ethereum or Base. For example, the median unused gas on Monad is 25.4%, compared to 54.7% on Ethereum and 98.6% on Base. At the 75th percentile, three-quarters of gas-weighted transactions on Monad have unused gas below 47.8%, while on Ethereum and Base this figure rises to 91.5% and 99.5% respectively.

Base stands out with consistently high unused gas: over 75% of gas-weighted transactions leave more than 76% of their declared limit unused, and the mean unused gas is 86.1%. Ethereum falls in between, with a mean of 56.3%. Monad's mean unused gas of 32.5% reflects the stronger incentive to set tight gas limits when fees are charged on the declared amount.

Taken together, the figure shows that transactions on Monad tend to reserve substantially less excess gas than on Ethereum and especially Base.

Setting Gas Limits on Monad

The data shows the ecosystem is already adapting: unused gas on Monad is significantly lower than on its peers, yet "out-of-gas" errors remain rare. But is there still room for improvement? Let’s look at how to tighten gas limits even further to increase efficiency without increasing transaction failure rates.

Assuming one is about to submit a transaction at time $t$, a natural first step is to call eth_estimateGas, which simulates the transaction against the current blockchain state and returns the minimum gas amount under which it would succeed in that state. However, the state used for estimation may differ from the state at which the transaction is ultimately executed. Transactions included in the interim can change the execution path and, in turn, gas consumption.
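As a rough illustration, eth_estimateGas is a standard Ethereum JSON-RPC method that takes a transaction object and returns the estimate as a hex string. The sketch below uses only the Python standard library; `rpc_url` is a placeholder for whichever endpoint is being used, and the helper names are hypothetical. The optional block argument is part of the Ethereum JSON-RPC specification, but whether a given endpoint accepts it is an assumption to verify.

```python
import json
import urllib.request
from typing import Any, Dict


def build_estimate_gas_request(tx: Dict[str, str], block: str = "latest",
                               request_id: int = 1) -> Dict[str, Any]:
    """Build the JSON-RPC payload for eth_estimateGas."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "eth_estimateGas",
        "params": [tx, block],
    }


def parse_estimate(response: Dict[str, Any]) -> int:
    """Convert the hex-encoded result into an integer gas amount."""
    if "error" in response:
        raise RuntimeError(f"estimation failed: {response['error']}")
    return int(response["result"], 16)


def estimate_gas(rpc_url: str, tx: Dict[str, str], block: str = "latest") -> int:
    """Send the request to rpc_url (placeholder endpoint) and return the estimate."""
    body = json.dumps(build_estimate_gas_request(tx, block)).encode("utf-8")
    request = urllib.request.Request(
        rpc_url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as response:
        return parse_estimate(json.load(response))
```

The returned number reflects only the state the node simulated against, which is why it should be treated as a starting point rather than a final gas limit.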

To reason about this execution uncertainty, we use state lookbacks (LB). A lookback measures the gap between the state used for estimation and the state at actual execution time. If the transaction is included in block N:

- LB0: Estimating against the state at the end of block N−1 (the block immediately preceding inclusion).

- LB1–LB30: Estimating against progressively older states, where LBX corresponds to the state at the end of block N−(X+1).

Larger lookbacks correspond to estimating against older state, which increases the probability of state drift and gas estimation differences. If the state drifts far enough, a transaction that may have succeeded at LB0 may simply become invalid.
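The lookback definition maps to a block number in a straightforward way. The helper below is a small sketch of that mapping; the function names are hypothetical, and the hex block tag follows the usual JSON-RPC encoding of block numbers.

```python
def estimation_block(inclusion_block: int, lookback: int) -> int:
    """Block whose end state is used for LB<lookback>: N - (X + 1)."""
    if lookback < 0:
        raise ValueError("lookback must be non-negative")
    block = inclusion_block - (lookback + 1)
    if block < 0:
        raise ValueError("lookback reaches before the genesis block")
    return block


def block_tag(block_number: int) -> str:
    """Hex-encode a block number as JSON-RPC expects it."""
    return hex(block_number)


# For a transaction included in block 100:
lb0_block = estimation_block(100, 0)    # state at the end of block 99
lb30_block = estimation_block(100, 30)  # state at the end of block 69
```

In a backtest, the inclusion block is known after the fact, so each successful transaction can be re-estimated against the state at `estimation_block(N, X)` for every lookback of interest.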

We track the share of transactions that continue to produce a valid estimate as coverage. The table below shows how coverage evolves for our dataset of 7,661,298 successful transactions as the estimation state becomes more stale.

This decay reflects a simple reality: once the state drifts far enough, some transactions can no longer be simulated and estimation fails altogether. In short, as lookback increases, coverage decreases.

But coverage is only the first hurdle. Even when a transaction remains valid at older lookbacks, the amount of gas it ends up using often changes as state drifts. Since eth_estimateGas is just using a snapshot of a single state, directly setting the resulting number as the gas limit may underestimate the actual gas needed at execution time. In practice, one would need a buffer: a safety margin that accounts for possible state changes so that it bridges the gap between estimation and execution.²

A simple rule: add a fixed % buffer

The simplest approach is to always apply a static buffer of +x% on top of the estimate, i.e.

gas_limit = eth_estimateGas * (1 + x%).
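A minimal sketch of this rule follows. Expressing the buffer in basis points (an implementation choice made here, not something the analysis prescribes) keeps the arithmetic in integers and avoids floating-point rounding when computing the limit. The second helper shows the unused gas that results when execution consumes exactly the estimate, under the assumption that the estimate was accurate.

```python
def buffered_gas_limit(estimate: int, buffer_bps: int) -> int:
    """gas_limit = estimate * (1 + x%), rounded up; buffer_bps=750 means +7.5%."""
    if estimate < 0 or buffer_bps < 0:
        raise ValueError("estimate and buffer must be non-negative")
    return (estimate * (10_000 + buffer_bps) + 9_999) // 10_000


def unused_gas_if_estimate_exact(buffer_bps: int) -> float:
    """Unused gas, 1 - gas_used / gas_limit, when gas_used equals the estimate."""
    return buffer_bps / (10_000 + buffer_bps)


limit_with_7_5_pct = buffered_gas_limit(100_000, 750)   # 107,500
floor_unused_7_5 = unused_gas_if_estimate_exact(750)    # about 7.0%
floor_unused_50 = unused_gas_if_estimate_exact(5_000)   # about 33.3%
```

Note that a buffer of x% does not translate into x% unused gas: since unused gas is measured relative to the limit, a perfectly accurate estimate with buffer x leaves x / (1 + x) of the limit unused.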

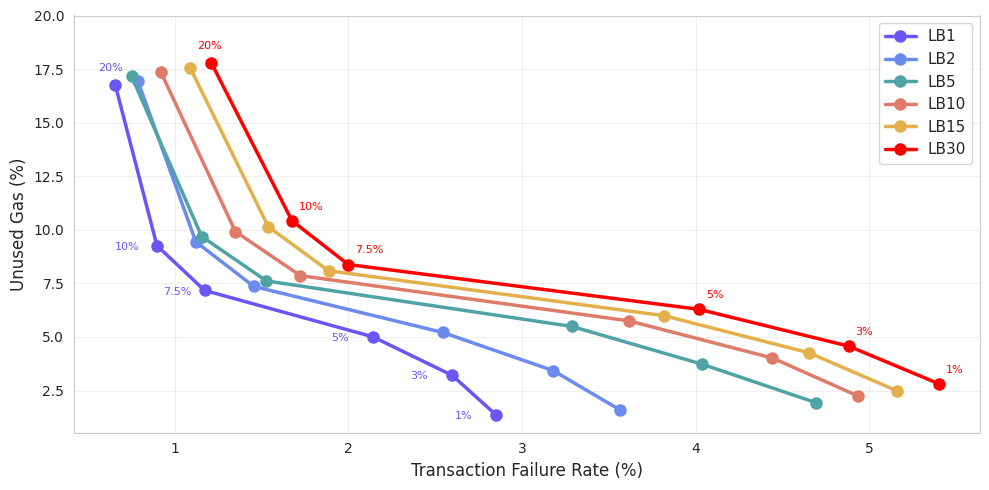

The figure below shows how fixed buffers trade off transaction failure rate (horizontal axis) against unused gas (vertical axis, gas-weighted) on Monad, for several state lookbacks (LB1, LB2, LB5, LB10, LB15, and LB30). With a block time of roughly 400ms, these correspond to about 0.4s, 0.8s, 2s, 4s, 6s and 12s of stale state at the time the estimate is made. Larger lookbacks therefore mean simulating the transaction on older state, resulting in more uncertainty on a transaction's execution.

Intuitively, lower unused gas means less overpayment, while lower failure rates mean fewer transactions that fail to execute with the buffered estimate. Note that our failure and unused gas metrics are approximations rather than exact measurements of live behavior (see footnote³). Each point is labeled with the applied buffer percentage.

When interpreting these results, it is worth noting that not all lookbacks are equally representative of real user behavior. Most wallets estimate gas and submit transactions close to execution, so shorter lookbacks such as LB1, LB2, and LB5 are more likely to line up with typical transaction lifecycles. Longer lookbacks like LB30, roughly 12 seconds, are probably uncommon in practice, but they are useful as a stress test to illustrate how estimation accuracy ultimately degrades as state becomes increasingly stale.

Finally, these lookbacks should be interpreted in execution terms. Because Monad produces blocks very frequently, a given lookback corresponds to many possible interim state changes (possibly affected by the large amount of gas used since estimation). How much this matters in practice depends on the network load and transaction mix, which may change as usage on Monad evolves.

As expected, smaller buffers (bottom-right of the chart) lead to very low unused gas but also higher failure rates. Failure rates rise steadily as the lookback increases, reflecting growing estimation error when using stale state. For example, with a 1% buffer, unused gas remains very low at around 1–3%, but failure rates increase from roughly 2.9% at LB1 to about 5.4% at LB30.

Increasing the buffer to 7.5% appears to result in the largest consistent improvement (reduction) in transaction failure rate. At this level, failures range from about 1.2% at LB1 to 2.0% at LB30, while unused gas remains relatively stable at about 7–9%. Further increasing the buffer to 10% lowers failure rates even more to roughly 0.9–1.7%, but unused gas rises into the 9–11% range.

Overall, for any fixed buffer, larger lookbacks consistently lead to higher failure rates and slightly higher unused gas. As previously explained, this is due to estimates computed against older state being less informative about the actual execution environment the transaction will ultimately encounter. As a result, underestimation becomes more likely, even when the same buffer is applied.
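The trade-off can be reproduced in spirit with a small backtest. The sketch below is an approximation under stated assumptions and does not reproduce the exact methodology behind the figures: each sample is a hypothetical `(stale_estimate, gas_used)` pair, a transaction counts as failed when its buffered limit is below the gas it actually used, and a failed transaction is assumed to consume its whole limit.

```python
from typing import Sequence, Tuple


def evaluate_static_buffer(samples: Sequence[Tuple[int, int]],
                           buffer_bps: int) -> Tuple[float, float]:
    """Return (failure_rate, gas_weighted_unused_gas) for one fixed buffer.

    samples holds (stale_estimate, gas_used) pairs; buffer_bps=500 means +5%.
    """
    failures = 0
    total_limit = 0
    total_unused = 0
    for estimate, gas_used in samples:
        # Buffered limit, rounded up, using integer arithmetic.
        limit = (estimate * (10_000 + buffer_bps) + 9_999) // 10_000
        total_limit += limit
        if gas_used > limit:
            failures += 1  # assumption: a failed transaction burns its full limit
        else:
            total_unused += limit - gas_used
    return failures / len(samples), total_unused / total_limit


# Made-up sample: two exact estimates, one small drift, one large drift.
sample = [(100_000, 100_000), (100_000, 104_000), (100_000, 120_000), (50_000, 50_000)]
failure_rate, unused = evaluate_static_buffer(sample, 500)
```

Sweeping `buffer_bps` over a range of values and repeating the evaluation per lookback would trace out one curve per lookback, in the same spirit as the chart discussed above.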

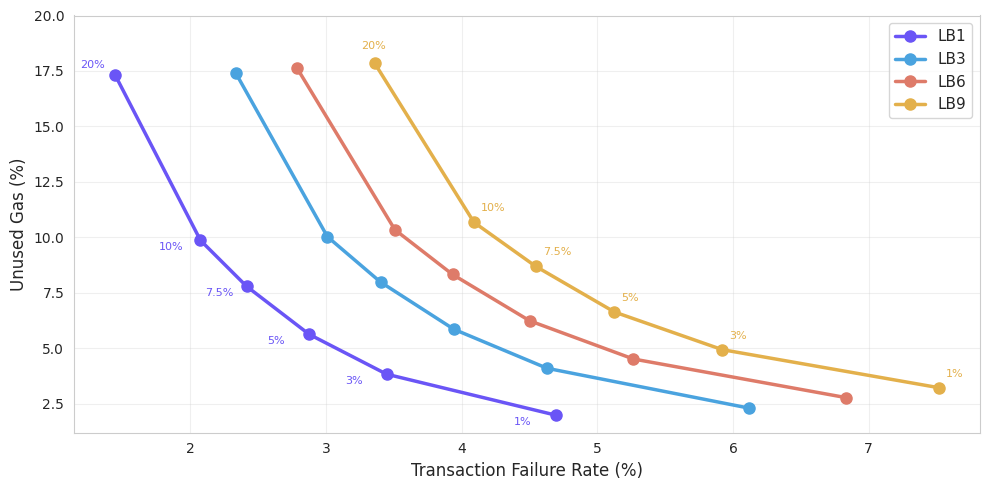

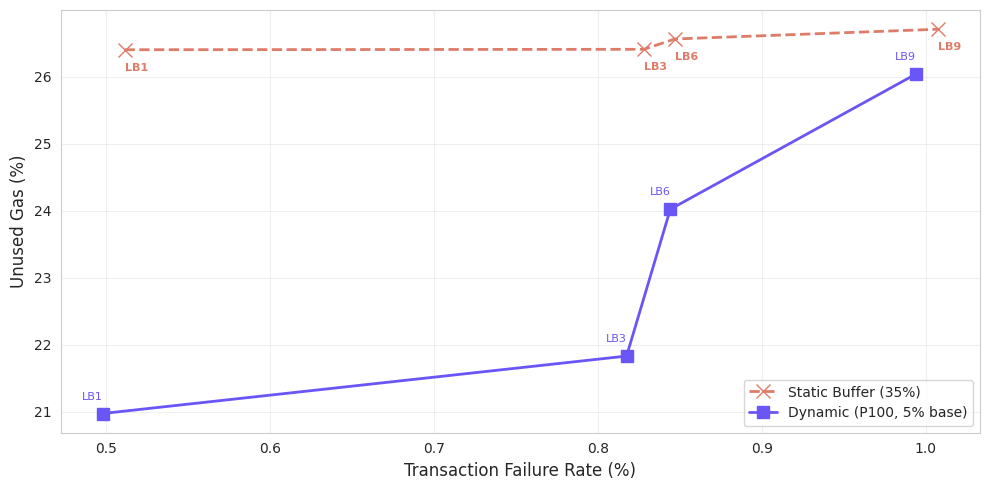

We repeat the same static buffer analysis on Base—a longstanding high-throughput EVM L2—to see how the tradeoff between unused gas and failure rate looks in a different execution environment. Using lookbacks LB1, LB3, LB6, and LB9 (roughly 2s, 6s, 12s, and 18s of stale state), we observe the same qualitative pattern: longer lookbacks increase failure rates, and larger buffers reduce failures at the cost of higher unused gas.

Overall, static buffers trace a clear Pareto frontier between unused gas and execution risk, with state lookback determining where a transaction falls on that curve. Fixed margins are simple and predictable, but they apply uniform headroom regardless of transaction variability. This naturally motivates adaptive buffering strategies that allocate gas more selectively.

A dynamic buffer

The previous approach is simple and does not require any knowledge about the specifics of a transaction’s gas usage and access patterns. Can we do better with more contextual information? The goal is to tighten gas limits as much as possible—lowering unused gas—without materially increasing the failure rate.

This is the idea behind the dynamic buffer. Instead of applying the same buffer to every transaction, we try to adapt the buffer based on how similar transactions have behaved in the recent past.

Concretely, we group transactions by receiver address and function selector. For each group, we look at a rolling six-hour window and compare gas estimates computed at an earlier state lookback LBX to the corresponding estimates at LB0. This allows us to measure, ex post, how much additional gas would have been required to safely use the LBX estimate without underestimating relative to LB0.

From these historical differences, we compute empirical percentiles of the required buffer. These percentiles are then used to set the buffer for future transactions with the same receiver and function selector.
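The grouping and percentile steps can be sketched as follows. This is an illustrative reading of the description, not the exact implementation: the record layout, the nearest-rank percentile method, and all names are assumptions. The required buffer for one observation is taken as the LB0 estimate divided by the LBX estimate, minus one.

```python
import math
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

WINDOW_SECONDS = 6 * 60 * 60  # rolling six-hour window

# Hypothetical record: (timestamp, to_address, calldata_hex, estimate_lbx, estimate_lb0)
Record = Tuple[int, str, str, int, int]
GroupKey = Tuple[str, str]


def group_key(to_address: str, calldata_hex: str) -> GroupKey:
    """Receiver plus function selector (first 4 bytes: '0x' + 8 hex characters)."""
    return (to_address.lower(), calldata_hex[:10].lower())


def nearest_rank_percentile(values: List[float], p: float) -> float:
    """Empirical percentile using the nearest-rank method (an assumed choice)."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[rank - 1]


def group_buffer_percentiles(history: Iterable[Record], now: int,
                             p: float) -> Dict[GroupKey, float]:
    """Per-group percentile of the buffer that the LBX estimate would have needed."""
    per_group: Dict[GroupKey, List[float]] = defaultdict(list)
    for timestamp, to_address, calldata_hex, est_lbx, est_lb0 in history:
        if est_lbx > 0 and now - WINDOW_SECONDS <= timestamp <= now:
            required = est_lb0 / est_lbx - 1.0  # negative if LBX overestimated
            per_group[group_key(to_address, calldata_hex)].append(required)
    return {key: nearest_rank_percentile(vals, p) for key, vals in per_group.items()}
```

A higher percentile `p` yields a more conservative buffer for the group, trading unused gas for a lower chance of underestimation.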

Buffer construction

For each transaction, we compute a transaction-specific buffer based on three cases:

Finally, the resulting buffer is clipped to lie between 0% and 50%, and the gas limit is set as:

$$\text{gas_limit} = \text{eth_estimateGas} \times \left(1 + \text{buffer}\right)$$

This approach allows the buffer to vary across transactions, allocating tighter limits to predictable interactions while remaining conservative where uncertainty is higher.
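The final clipping and limit computation can be sketched as below. How the raw buffer is chosen for a given transaction is left outside this sketch; the function only applies the 0% to 50% clip and the gas limit formula stated above. The function name and the use of floating-point buffers are assumptions.

```python
import math


def clipped_gas_limit(estimate: int, raw_buffer: float,
                      min_buffer: float = 0.0, max_buffer: float = 0.5) -> int:
    """Clip the buffer to [min_buffer, max_buffer], then apply it to the estimate.

    raw_buffer is a fraction (0.10 means +10%). The result is rounded up so the
    limit never falls below estimate * (1 + buffer).
    """
    buffer = min(max(raw_buffer, min_buffer), max_buffer)
    return math.ceil(estimate * (1.0 + buffer))


predictable = clipped_gas_limit(100_000, -0.10)  # negative buffer clipped to 0%
volatile = clipped_gas_limit(100_000, 0.80)      # large buffer clipped to 50%
typical = clipped_gas_limit(100_000, 0.25)       # within range, used as-is
```

The upper clip bounds the worst-case overpayment for highly variable interactions, while the lower clip ensures the limit never drops below the estimate itself.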

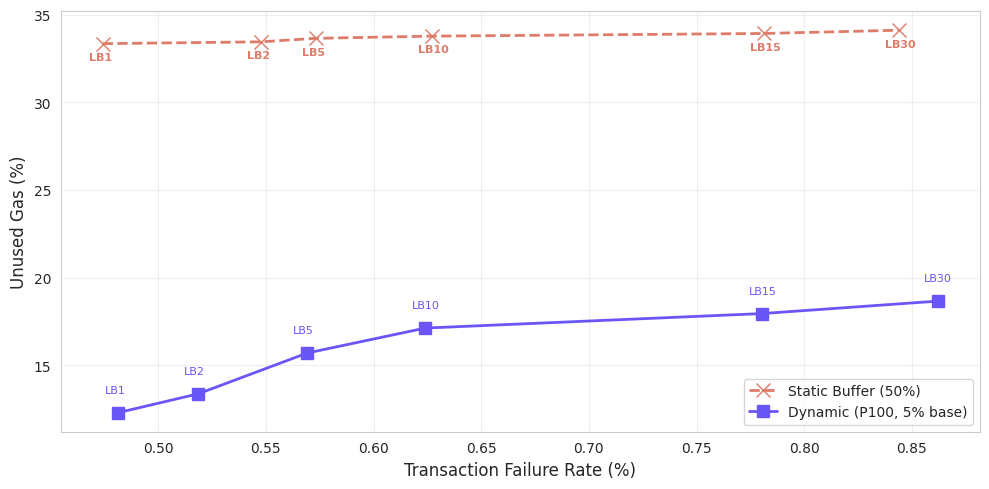

Let’s see how the dynamic buffer compares to its static counterpart. Consider the dynamic strategy with a 5% fallback. To find a fair static baseline, we select the static buffer that approximately matches the dynamic approach's failure rate—this turns out to be a 50% buffer. Notably, the dynamic approach achieves substantially lower unused gas, making a strong case for the dynamic-margin approach. More specifically: across lookbacks, the static buffer remains near 33-34% unused gas, whereas the dynamic approach ranges from roughly 12% to 19% unused gas at lower failure rates, depending on the lookback. Additionally, this transaction failure rate roughly matches the current out-of-gas failure rates observed on Monad, while still achieving significantly lower unused gas.

We repeat the same dynamic-buffer construction on Base as well, using a 5% fallback and comparing it to a static baseline whose buffer (roughly 35%) is calibrated to achieve a similar failure rate. For the dynamic versus static comparison, we exclude transactions whose behavior distorts gas estimation, specifically ERC-4337 entry points, optimistic MEV contracts, and silent-failure pairs, ensuring the comparison reflects genuine estimation drift.⁴

As on Monad, the dynamic strategy achieves materially lower unused gas (gas-weighted) at comparable reliability levels. While the static buffer remains around 26% unused gas across lookbacks, the dynamic approach ranges from roughly 21% at short lookbacks to about 26% at longer ones. The gap is largest at small lookbacks, around 5 percentage points at LB1, and narrows as state staleness increases.

On both Monad and Base, dynamic buffering achieves lower unused gas at comparable failure rates by allocating headroom selectively. Beyond motivating a general-purpose strategy, the dynamic buffering results indicate that application developers with transaction-specific context can encode tighter gas limits directly to further increase utilization rates.

Conclusion

Charging fees based on the pre-declared gas_limit changes how gas limits should be set. On Monad, gas limits are no longer a parameter that can be set crudely without any consequence. They directly determine both the fee paid by the sender and how much block capacity a transaction reserves at scheduling time.

Mainnet data shows that users, wallets, and applications have already adapted to this change. Despite gas being charged on the declared limit, out-of-gas transaction failure rates on Monad remain very low, while unused gas is substantially lower than on Ethereum and Base. This indicates that gas limits are being set more tightly in practice, reducing overpayment and limiting deadweight block usage.

At the same time, gas estimation is inherently sensitive to the state against which the transaction is simulated. As a result, gas limit selection is best viewed as a trade-off between minimizing unused gas and minimizing execution failure risk. Simply adding a fixed buffer to the estimate already performs well. By using additional context from recent execution history, a dynamic buffer can allocate headroom more adaptively based on transaction type, significantly reducing overestimation at comparable failure rates. These results also motivate heterogeneous, application-dependent gas limit settings: applications that already have transaction-specific context can set tight limits directly, without relying on a general-purpose mechanism.